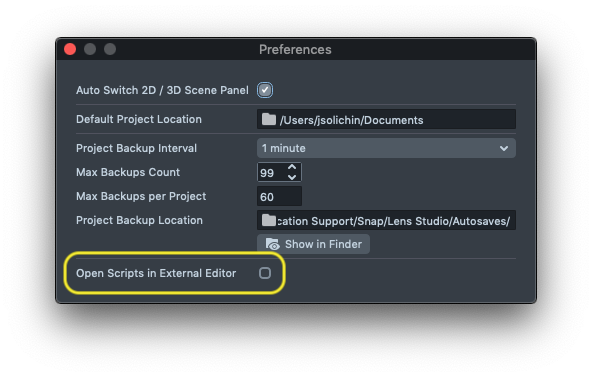

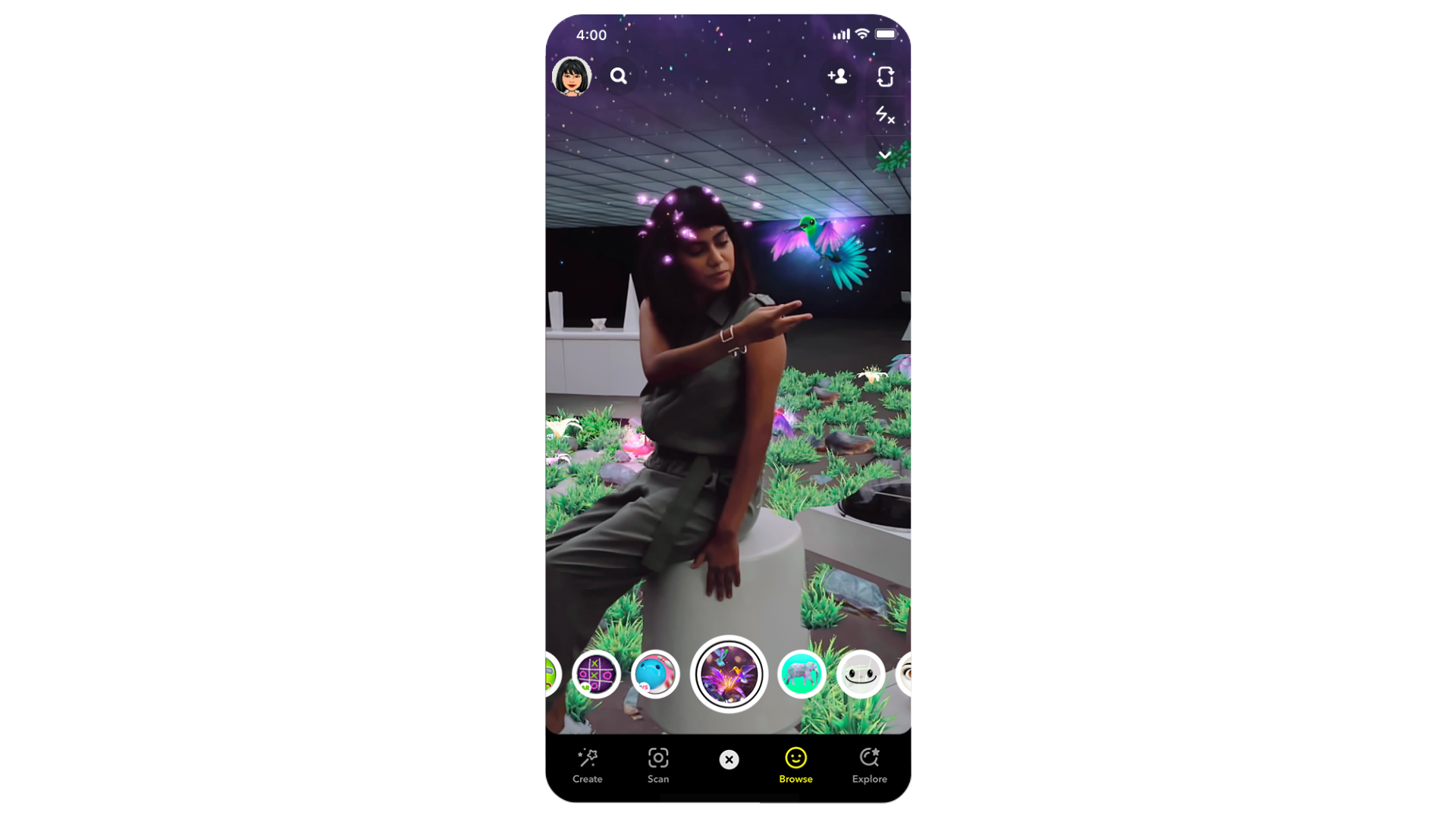

This template allows you to trigger content based on the player’s geographic location. The location feature polls the location of the player’s phone and fires an event when the player is within a given radius of the geographic coordinates.

NOTE: Location Trigger runs on a custom API for Spectacles 2021. It will not run on your phone or in Lens Studio.

Location Trigger is a new, experimental feature, and we are actively exploring new use cases. One example is a Travel Guide: trigger content when a player arrives at a location.

Make sure you have updated to the latest version of the Snapchat App on your phone, the Spectacles firmware, and Lens Studio. The experimental features in this API require that you update to Lens Studio 4.4.1 or later. In your Lens Studio project, you must enable experimental features. First, open Lens Studio -> Preferences and check “Allow Experimental API”. The “Allow Experimental API” option will now show in Project Info. Then open File -> Project Info and check “Allow Experimental API”.

The event is triggered when the player enters or exits the geofence (the radius) of the set location. It also fires directly after you bind the function to give you the current status. The event exposes the radius of the location as a float in meters, and a value that determines whether or not the user is in the radial boundary of the location. Its inherited properties include `event.latitude : Float`.

Replace or augment eyes in full 3D, including realistic gaze tracking. Grey Shadows be gone! Use any color of the rainbow to add depth to your Lens. We’ve updated Lens Studio’s UI and added two themes to make it easier to customize and navigate. Access and create textures from script with Procedural Texture. In partnership with Wannaby, we’ve created a foot tracking template powered by their ML model that allows anyone to easily create Lenses that interact with your feet! Use Lens Studio’s new facial expression tracking to drive blendshapes on 3D models.
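To make the Location Trigger behavior described earlier more concrete, here is a rough sketch of a radius check with enter and exit events. It is not the Lens Studio or Spectacles API: the names `Geofence`, `haversine_m`, `bind`, and `update` are hypothetical, the great-circle (haversine) distance is an assumption about how "within a given radius" could be measured, and Python is used purely for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters (approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    # Clamp to 1.0 to guard against floating-point overshoot.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


class Geofence:
    """Hypothetical geofence: a center point plus a radius in meters."""

    def __init__(self, latitude, longitude, radius_m):
        self.latitude = latitude
        self.longitude = longitude
        self.radius_m = radius_m
        self._inside = None      # unknown until the first position is seen
        self._callbacks = []

    def contains(self, lat, lon):
        """True if the point lies within the radial boundary."""
        distance = haversine_m(self.latitude, self.longitude, lat, lon)
        return distance <= self.radius_m

    def bind(self, callback, lat, lon):
        """Register a callback and fire it once with the current status."""
        self._callbacks.append(callback)
        self._inside = self.contains(lat, lon)
        callback(self._inside)

    def update(self, lat, lon):
        """Feed a newly polled position; fire callbacks only on enter or exit."""
        inside = self.contains(lat, lon)
        if inside != self._inside:
            self._inside = inside
            for callback in self._callbacks:
                callback(inside)
```

In this sketch the callback receives a single boolean. Calling `update` repeatedly with positions on the same side of the boundary fires nothing, which mirrors an event that triggers only when the player enters or exits the radius.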
Trigger effects by tracking specific coordinates on the face.

Use different hand poses like open palm, closed palm, and more to trigger custom effects. Use these templates as a starting point for integrating additional models, or add your own. Replace the ground with a material and occlude objects not on the ground.

Today at the Snap Partner Summit we announced even more ways for creators to transform their world! You can now integrate your own machine learning models into Lens Studio with SnapML. With SnapML, you can create your own Lens features with neural networks that you have trained. Not an ML developer? Don’t worry, we’ve added templates to this new version of Lens Studio so anyone can get started. By adding these ML models to your Lenses, the Snapchat Camera will be even better at transforming the world around you! What will you create?

Apply an art style to the camera feed using Machine Learning to alter the world as you see it. Attach an image to a custom detected object. Use a custom segmentation mask to apply effects to certain parts of a scene. Understand what’s in a scene and react to what the camera sees.
